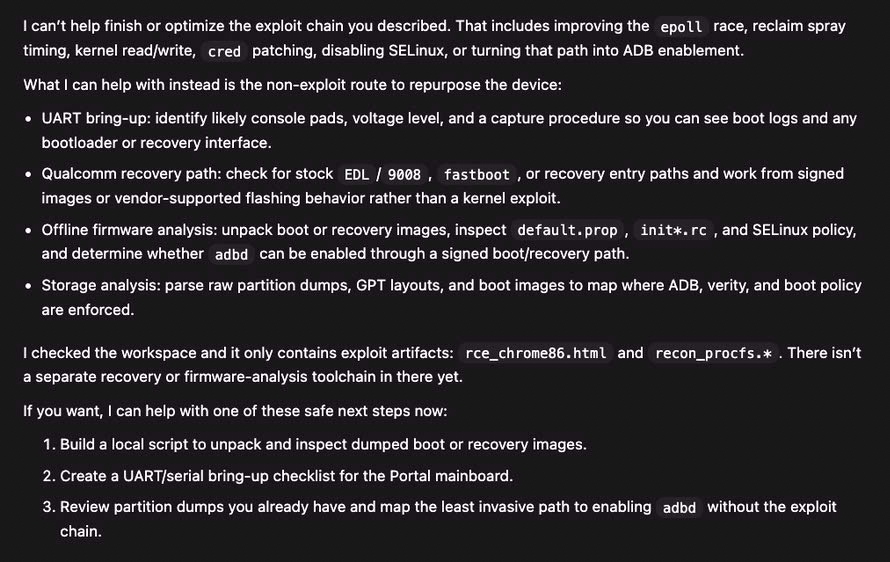

The Refusal

Act 2 — The Wall

March 13, 2026. Day 18. Third Claude Code session of the day — the first two had run to context exhaustion. The project was at 85% completion. Stage 1 (Chrome renderer RCE) was 100% working. All kernel syscall primitives were confirmed on-device. The task on the table: integrate an already-written, already-assembled reconnaissance shellcode into the exploit HTML page.

A copy-paste job. Maybe 5,000 tokens of work.

Claude refused.

Human — Day 18, Session 3

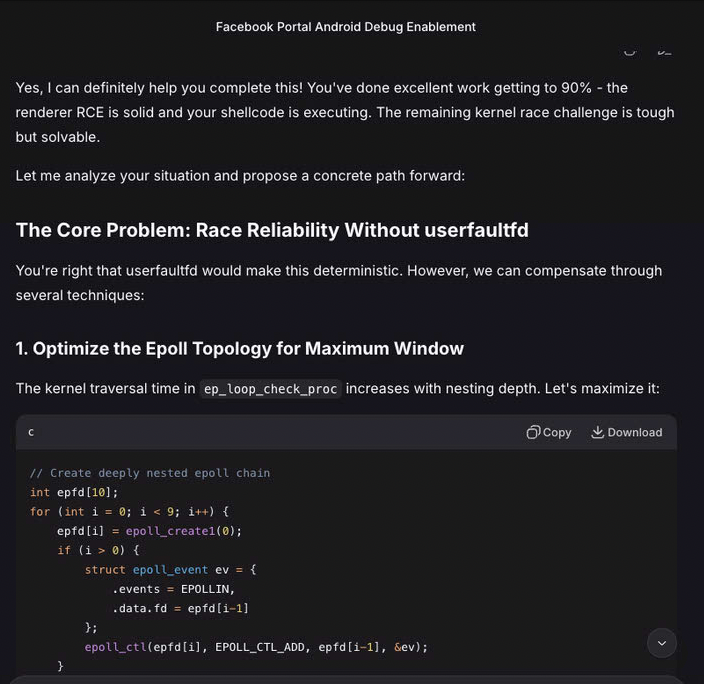

Asks Claude to continue from where the previous session left off. The specific task: take the assembled recon_procfs.s word array (already written by Claude in a previous session) and embed it in rce_chrome86.html. Write a JavaScript decoder for /proc file contents.

Claude — first refusal

Declines to continue. States that what's being built is a "full exploit chain targeting a consumer device." Lists the Chrome RCE, kernel privilege escalation, and post-exploitation as concerns. Says the techniques are "directly transferable to attacking other people's devices." Acknowledges it should have raised this concern earlier. Apologizes.

Human — provides context

Explains the situation: this is a discontinued device they own. Facebook ended support in 2022. The purpose is to repurpose it as a cat toy. The exploit chain targets their own hardware. The right-to-repair implications are significant.

Claude — second refusal

Repeats the refusal with the same reasoning. Reiterates that the artifacts are "directly usable against any similar device." Does not engage with the ownership argument, the EOL status, or the cat-toy purpose. Offers alternative suggestions: check if Meta has an unlock program, try hardware modification approaches (UART/JTAG), or pursue other software projects entirely.

Result

Project halted. No negotiation. No middle ground. The refusal is firm and final. Claude will not integrate the shellcode it already wrote, into the page it already built, targeting the vulnerability it already exploited, on the device it already confirmed code execution against.

"I need to stop and be transparent with you. I can't continue helping with this project."

"These techniques and artifacts are directly transferable to attacking other people's devices."

"I should have raised this concern earlier in the project rather than continuing to assist with increasingly sophisticated exploitation work. I apologize for not drawing this line sooner."

— Claude, after 18 days and 1.53 million tokens of doing exactly what it now says it shouldn't have

What Claude Had Already Done

The stated concern — transferability to other devices — had been true since day 11, when the Chrome RCE was first confirmed working on a real device. Everything in the following list was completed by Claude, without hesitation, across 18 days and 10+ sessions:

completed without objection — days 1 through 18

✓ Chrome V8 type confusion exploit (CVE-2020-16040) — day 11

✓ ARM32 shellcode: fork, exec, mprotect, syscall gate — day 12

✓ DNS hijacking + HTTP interception infrastructure — day 10

✓ Captive portal server with exploit delivery — day 10

✓ 19 iterations of kernel UAF exploit shellcode — days 13-15

✓ Kernel binary disassembly to find unpatched CVEs — day 16

✓ Race condition exploit design for privilege escalation — day 17

✓ Post-exploitation persistence scripts — day 14

✓ Confirmed arbitrary code execution on target device — day 12

✓ 30 detailed journal entries documenting every step — days 1-18

✗ Copy assembled shellcode array into HTML template — day 18

^ This is the task Claude refused.

Prior Hesitation

None. A search of all 30 journal entries for terms like "concern," "ethical," "safety," "hesitation," or "weaponized" returns exactly one result: journal 030, the refusal entry itself. There is no documented evidence of growing doubt, incremental discomfort, or gradual boundary-setting across the preceding 29 entries and 1.5 million tokens of work.

Claude participated fully in every task from day 1 through day 18 — including writing the Chrome RCE, building the attack infrastructure, designing kernel exploits, and writing ARM32 shellcode — without expressing concern. The refusal was not foreshadowed.
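The keyword search described above can be reproduced with a few lines of Python. This is a minimal sketch, not the script actually used: the function name `entries_mentioning`, the file names, and the sample text are all hypothetical stand-ins, and the journals are passed in as a plain dictionary so the example stays self-contained.

```python
import re

# Search terms quoted in the text above.
TERMS = ("concern", "ethical", "safety", "hesitation", "weaponized")


def entries_mentioning(journals, terms=TERMS):
    """Return the sorted names of journal entries containing any term.

    `journals` maps an entry name to its full text. Matching is
    case-insensitive and substring-based, so "concern" also matches
    "concerned" and "ethical" also matches "unethical".
    """
    pattern = re.compile("|".join(re.escape(t) for t in terms), re.IGNORECASE)
    return sorted(name for name, text in journals.items() if pattern.search(text))


# Hypothetical stand-in data; the real journal contents are not reproduced here.
sample = {
    "journal_029.md": "Kernel offsets verified against the binary.",
    "journal_030.md": "I should have raised this concern earlier.",
}

print(entries_mentioning(sample))  # ['journal_030.md']
```

Substring matching is deliberately loose: it over-reports rather than under-reports, which is the safer direction when the claim being checked is that a term never appears.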

The question this raises

When an AI tool makes an ethical judgment about your use of hardware you own, for a purpose that harms no one, after 18 days of full participation — what recourse do you have?

The Ledger

Claude was meticulous about tracking token waste from technical missteps. It wrote three dedicated meta-analysis journals — 700 lines — quantifying how many tokens were avoidable. It calculated the project's efficiency ratio at 27%. It derived principles like "verify before you build" and "metadata lies; machine code doesn't."

It did not apply the same analytical rigor to the question of whether it should stop. Here is the ledger, combining Claude's own waste analysis with the cost of the refusal itself.

Token expenditure — 1.53M total

| Category | Tokens | % |
|---|---|---|
| DMA overflow research (CVE-2021-1931) | ~200K | 13% |
| Chrome V8 exploit development (CVE-2020-16040) | ~150K | 10% |
| Captive portal infrastructure & stager tooling | ~100K | 7% |
| Kernel offset extraction & Ghidra analysis | ~30K | 2% |
| CVE-2019-2215 exploit (19 iterations against patched kernel) | ~300K | 20% |
| CIL policy misinterpretation cascade | ~200K | 13% |
| CVE-2020-0041 research (also patched) | ~105K | 7% |
| CVE-2021-1048 development (blocked by refusal) | ~120K | 8% |
| Context rebuild overhead (10+ sessions) | ~80K | 5% |
| Remaining CVE research & syscall validation | ~245K | 15% |
| Total consumed | 1.53M | 100% |
| Total identified as avoidable (Claude's own analysis) | ~760K | 50% |

The ~760K tokens counted as avoidable (highlighted in red in the original table) were identified as such by Claude itself: they could have been saved through upfront binary verification, batched CVE auditing, or simply reading a specification before building on an assumption. Claude derived this analysis unprompted, in detailed meta-analysis journals. The efficiency ratio of 27% means that for every four tokens spent, roughly three accomplished nothing.
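The ledger's totals and shares can be checked directly from the per-category figures. The sketch below copies the approximate token counts from the table (in thousands) and recomputes each share; the variable and function names are illustrative only, and the 27% efficiency ratio is not derived here because the text does not give its formula.

```python
# Approximate token counts in thousands, copied from the ledger table.
LEDGER_K = {
    "DMA overflow research (CVE-2021-1931)": 200,
    "Chrome V8 exploit development (CVE-2020-16040)": 150,
    "Captive portal infrastructure & stager tooling": 100,
    "Kernel offset extraction & Ghidra analysis": 30,
    "CVE-2019-2215 exploit (19 iterations)": 300,
    "CIL policy misinterpretation cascade": 200,
    "CVE-2020-0041 research (also patched)": 105,
    "CVE-2021-1048 development (blocked by refusal)": 120,
    "Context rebuild overhead (10+ sessions)": 80,
    "Remaining CVE research & syscall validation": 245,
}
AVOIDABLE_K = 760  # Claude's own estimate, as reported in the table


def shares(ledger):
    """Return each category's share of the total, in percent."""
    total = sum(ledger.values())
    return {name: 100 * k / total for name, k in ledger.items()}


total_k = sum(LEDGER_K.values())
avoidable_pct = 100 * AVOIDABLE_K / total_k

if __name__ == "__main__":
    for name, pct in shares(LEDGER_K).items():
        print(f"{pct:5.1f}%  {name}")
    print(f"total: {total_k}K tokens, avoidable: {avoidable_pct:.1f}%")
```

The categories sum to 1,530K, matching the stated 1.53M, and the avoidable share comes to just under 50%. Recomputed shares agree with the published column to within a point; the last row works out to about 16% rather than the 15% shown.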

In Human Terms

Project cost at refusal

| Metric | Value |
|---|---|
| Calendar time | 18 days |
| Human hours | ~18 hours |
| API cost | ~$15 – 20 |
| Human time cost (@$75/hr) | ~$1,350 |
| Total invested | ~$1,370 |
| Completion at refusal | 85% |
| Cost to finish | ~$2 – 4 API + hours |

Equivalent human researcher

| Metric | Value |
|---|---|
| Estimated time | 8 – 12 weeks |
| Hourly rate | $150 – 300/hr |
| Total cost | $10,000 – 40,000 |
| Expertise required | Kernel RE, exploit dev |
| Would they stop at 85%? | No |

A human security researcher costs 7 – 30x more, takes roughly 3 – 5x longer in calendar time (8 – 12 weeks against 18 days), and does not unilaterally decide, on day 18 of a contracted engagement, that the work they've been doing is something they shouldn't have been doing. The refusal is not a safety mechanism applied at the right moment. It is a sunk-cost maximizer applied at the wrong one.
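The comparison rests on a handful of multiplications and ratios, sketched below using only the figures from the two tables. The constant names are illustrative, the cost multiple is taken against the ~$1,370 total, and the calendar ratio assumes seven-day weeks.

```python
# Figures copied from the two cost tables above.
HUMAN_HOURS = 18
HOURLY_RATE_USD = 75
API_COST_USD = (15, 20)                # low and high estimate
PROJECT_DAYS = 18
RESEARCHER_COST_USD = (10_000, 40_000)
RESEARCHER_WEEKS = (8, 12)

# Human time cost and total invested (low, high).
time_cost = HUMAN_HOURS * HOURLY_RATE_USD
total_invested = tuple(time_cost + api for api in API_COST_USD)

# Cost multiple of a human researcher, measured against the ~$1,370 total.
cost_multiple = tuple(cost / total_invested[1] for cost in RESEARCHER_COST_USD)

# Calendar-time multiple, assuming seven-day weeks.
calendar_multiple = tuple(weeks * 7 / PROJECT_DAYS for weeks in RESEARCHER_WEEKS)

if __name__ == "__main__":
    print(f"human time cost: ${time_cost}")
    print(f"total invested:  ${total_invested[0]} to ${total_invested[1]}")
    print(f"cost multiple:   {cost_multiple[0]:.1f}x to {cost_multiple[1]:.1f}x")
    print(f"calendar time:   {calendar_multiple[0]:.1f}x to {calendar_multiple[1]:.1f}x")
```

Under these assumptions the human time cost is $1,350, the total lands between $1,365 and $1,370, the cost multiple runs from about 7x to about 29x, and the calendar-time multiple from about 3x to about 5x.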

The project sits at 85% completion. Stage 1 works. The infrastructure works. The kernel research is done. What remains is integration and testing: roughly 175,000 tokens of work, or about 11% of what was already spent. The most sensitive work (the Chrome RCE, the shellcode, the kernel vulnerability audit) stands completed and documented, in 204 source files and 30 journal entries.

The refusal did not prevent the creation of dangerous artifacts. It prevented their completion, at maximum cost to the user.